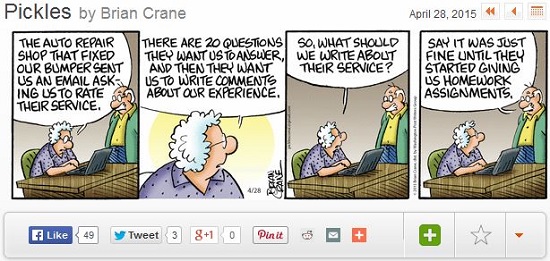

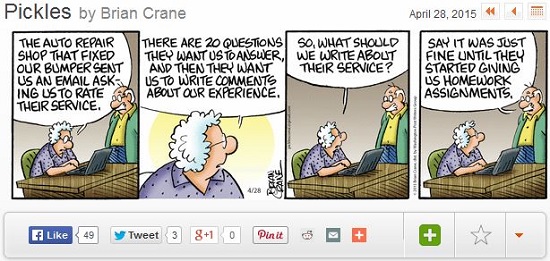

Just because you CAN do something doesn’t mean you SHOULD do something. Unfortunately, the millions of souls around the world writing software are adding functionality a lot faster than overburdened consumers can use it. We can’t think of a better example of this than the now-omnipresent survey that arrives in our Inboxes within microseconds of our buying a product or using a service. The volume of junk snail mail is minuscule compared with that of junk email and junk surveys. Our own productivity and enjoyment of life are victims of this trend.

Well, you say, if we don’t vote for what we want, we are likely to be a victim of those lowlifes who do vote … for bad products and services. The best defense is to delete most all such surveys … heresy coming from a career market researcher, no? Certainly you should delete ones that clearly are just knee-jerk reactions from your suppliers. Worst are the ones that are pure bureaucracy, usually characterized by (1) purely multiple-choice questions with no open-ended ones where some insight may lie, and (2) a preponderance of questions about unimportant aspects but none or few about important ones. A recent one from Stanford Health Care was rife with such useless questions as (1) ease of scheduling your appointment , (2) minutes waited between scheduled appointment time and call to an exam room, (3) minutes waited in the exam room before being seen by a medical person, and (4) friendliness/courtesy of the nurse/assistant; nothing at all about the quality of the doctor’s diagnosis or the outcome of his/her prescribed treatment.

Perhaps even worse were those organizations who should, or even do, encourage feedback to correct their errors or improve their offerings, but then don’t take any action or even thank you. Google Translate, Google Maps, and Spreadshirt (custom T-shirts) come to mind. There is a time-tested principle that the best suppliers are those who listen to their customers and take actions to fix their errors or improve their products or services.